Why OpenAI's Privacy Filter Isn’t Enough for Production Data Masking

.png)

By Ben Shaw, Head of Engineering

Last week, OpenAI released Privacy Filter, a 1.5B-parameter open-weight model that detects and redacts PII on a laptop or in a browser, scoring 96% F1 on the PII-Masking-300k benchmark.

In early May, we'll ship the DataMasque Local AI Engine, our self-hosted PII detection product that is an optional add on for customers. It runs in your own environment, whether that’s AWS, Azure, GCP or on-prem. All it needs is a GPU, and no data leaves your network.

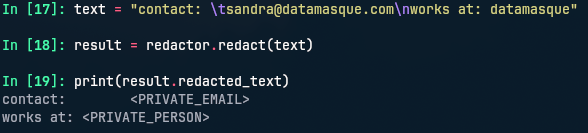

A note on terms first. Redaction means removing or blanking the PII and that's where Privacy Filter and similar models stop.

When using DataMasque, we mask by replacing the sensitive information like PII, PHI and payment card information with synthetically identical data - realistic values that preserve referential integrity across every table, file and format they appear in, so the data can be used for testing, analytics and AI workloads.

Privacy Filter does have its use cases. It shines in limited-resource environments where blanking out PII is enough and realistic substitutes aren't needed. Things like scrubbing log files or sanitizing LLM prompts on a developer's laptop. DataMasque is the right tool when you’re handling enterprise datasets and the masked output requires realism, consistency, referential integrity and an audit trail.

Why a model isn't enough

In a technical sense, Privacy Filter is context-aware in that it uses surrounding text to know it should flag the ‘Mark’ in ‘Hi, Mark’ and not ‘mark’ in ‘you got a high mark’. It does that well, and the 96% F1 on PII-Masking-300k is a real result.

However, useful data needs a different kind of context: knowing whose PII is on the page. A clinical note that mentions both a doctor's name and a patient's name produces two private_person tags from Privacy Filter, with no way to tell them apart. The taxonomy is fixed at eight labels, whereas DataMasque supports an unlimited number of custom entities that you define, and ships with 21 HIPAA types out of the box. OpenAI's own model card states: "The model will only identify personal data spans that match the trained label taxonomy…" A static taxonomy is by design and it’s not possible to configure your way around it.

OpenAI has also documented other limitations in this same card:

- Under-detection of uncommon names and domain-specific identifiers.

- Over-redaction of public entities when context is ambiguous.

- Performance drops on non-English text.

In our testing, it even thinks DataMasque is a person!

No model is reliable on algorithmic or checksum-based identifiers. A credit card number is a credit card only if it passes the Luhn check. A Brazilian CPF, Australian Business Number and New Zealand IRD all have their own checksums. A model can recognize that a 16-digit string looks like a card, but it can't confirm it.

Likewise, models won't reliably detect your internal employee ID format (say, two letters plus six digits) without being told it exists. Same for any set of internal terms sensitive in your context.

We solve for this by augmenting model detection with four other detectors specifically for these types of PII.

Five matchers

The model handles open-ended, contextual cases: person names, locations, addresses and free-text dates. Labels are customer-defined, so compliance teams can configure pairs like PATIENT_FIRST_NAME and DOCTOR_FIRST_NAME (or anything else they need) and apply different masking rules to each.

Around it sit four other matching approaches:

- Regex for deterministic shapes (emails, URLs, IP addresses and postal codes).

- Checksum for structured identifiers (credit cards, IBANs and tax numbers). When we mask the value, we generate a new valid checksum so downstream validation still passes.

- Related sources, matching against PII already in your own database. If a patient_id exists in a structured column, we also find everywhere it occurs in unstructured text.

- Seed files, your enterprise’s unique terms, like project codenames, internal brand identifiers, and the niche customer names nobody else's training set knows.

Consistency

Our consistent masking also applies to unstructured masking.

When masking structured data in systems like PostgreSQL or MySQL, we generate consistent synthetic identities - replacing real names, account numbers and other identifiers with realistic substitutes that maintain referential integrity across tables and databases.

Those same synthetic identities carry through to unstructured data. If John Doe becomes David Carter in the CRM, that same identity appears in call transcripts, application logs and every other unstructured source referencing that individual - keeping relationships between systems intact and preserving the context AI models depend on.

This cross-system consistency is something Privacy Filter and other PII detection models and LLM-redaction proxies we've evaluated can’t address.

Cost

A 10 GB workload through Claude APIs (which can be run on AWS Bedrock for privacy) costs around $37,500 USD for synthetic generation ($15 per million output tokens, since the model is mostly producing) or $45,000 for detection ($18 per million blended, since input and output volumes match).

The same 10 GB through DataMasque's Local AI Engine on a single $0.80/hour AWS GPU instance is roughly $1,300, about $0.50 per million tokens - operating in your environment, with the same compute and with no data leaving your network.

For a regulated enterprise who needs de-identified data for AI use cases, such as model evaluation or experimentation, enterprise-scale datasets don’t fit a per-token budget.

DataMasque prices per environment, allowing you to mask petabyte-scale datasets without cost consideration.

What production actually requires

No LLM-based tool can achieve the accuracy and scale that is required for enterprises.

Here are some of the other capabilities we've built to deliver synthetically identical data at enterprise scale:

- A customer-deployed REST service with a web UI.

- Support for Oracle, SQL Server, DB2, Postgres and more on the database side; data warehouses; structured data (CSV, Parquet, Avro and more); semi-structured data (JSON and XML); and unstructured data (PDF, Word and text).

- Open-source CLI built for safe agentic access to DataMasque.

- An open-source Python SDK for ops automation.

- The cross-format consistency described above.

If you're exploring how to safely de-identify data for AI use cases, request a demo and we'll do a walkthrough tailored to your environment.

The DataMasque Local AI Engine ships in early May 2026.